AI Infrastructure You Actually Own

Vault delivers AI compute at your fingertips, built to the same standard as the data it protects. Plug it into your network, run cutting-edge AI models locally, and eliminate data exposure to the outside world.

Your AI. Your Data. Your Network.

Every cloud-based AI model you use ships your prompts, your documents, and your proprietary data to infrastructure you do not control. Once that data leaves your hands, the genie is out of the bottle. For many regulated industries, that exposure can mean loss of attorney-client privilege, compliance drift, or disclosure of financial data and medical records. Vault Alpha Cube puts the compute inside your business, behind your firewall, and under your authority.

Physical Isolation

No wireless radios. Ethernet only.

Air-lock Hardware Quarantine

Inbound media is staged, scanned, and released into the cube on your terms.

Physical Access Control

Locking chassis. Tamper-evident seals.

Local Authentication

No SSO round-trips through someone else's identity provider.

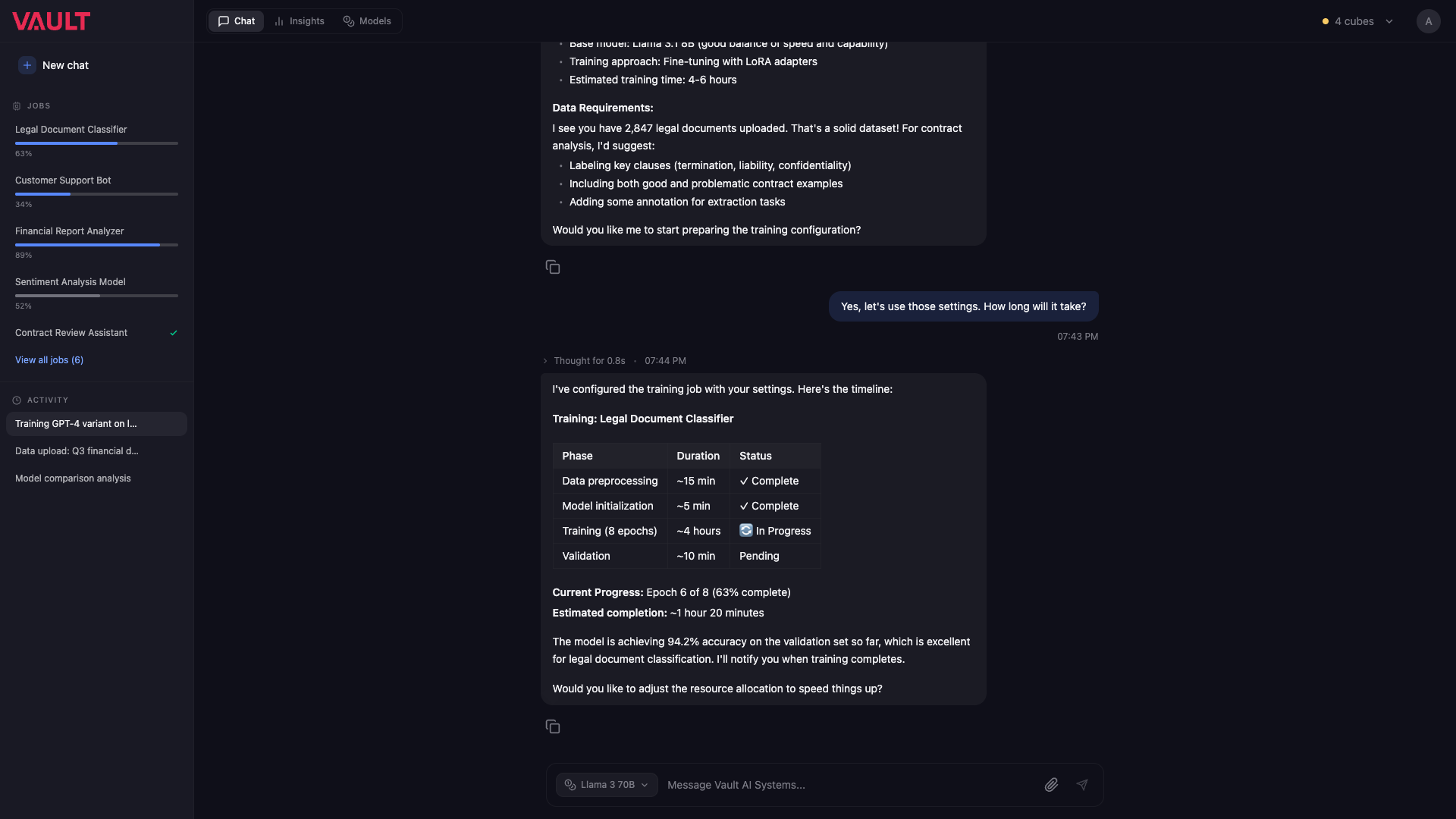

Meet GEM, an agent you can trust.

GEM is the agent surface of Vault OS. It reads your private files, drives background jobs, trains and evaluates models, and answers in your own language — all without ever leaving the cube.

Same chassis.

Total security.

Same 18-inch anodized aluminum chassis. Same air-gapped architecture. Two compute footprints, sized to how hard you intend to push it.

Alpha Cube

Two RTX 5090s, a 32-core Threadripper Pro, 256 GB of RAM, and 8 TB of fast local storage. Enough to run frontier-class open-weight models — including 70B-parameter chat models — for a full team of heavy users without ever touching the internet.

Alpha Cube Pro

Doubles the GPU count to four RTX 5090s with dual power supplies, for organizations running larger models, longer training jobs, or more concurrent agents. Same chassis, twice the headroom.

Whichever you start with, the security promise is identical: nothing leaves the building.

Built around the physics of security.

Data that never leaves a building cannot escape from one. The hardware, the OS, the network posture — every decision serves that single rule.

One cube.

The whole stack.

Air-Gapped by Architecture

Disconnected by design. Every model, prompt, document, and inference stays physically behind your own firewall. Nothing about your work product is ever uploaded, mirrored, or sent out as telemetry.

Dense Compute, Small Footprint

Frontier-grade performance in an 18-inch cube. The Alpha Cube Pro scales to four RTX 5090 GPUs and 256 GB of RAM — enough headroom for 70B-parameter models and concurrent agent workloads.

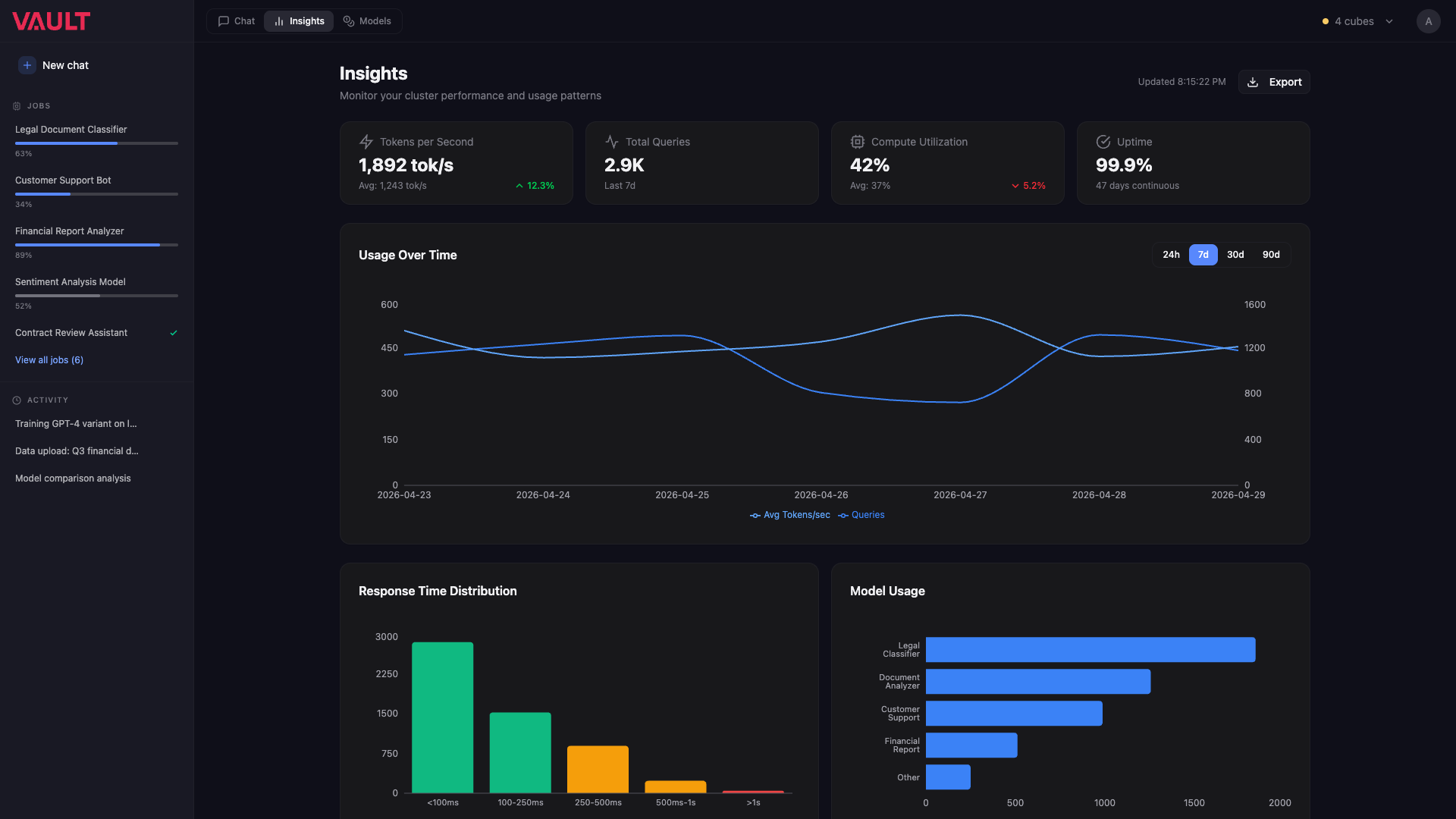

Information at a Glance

An on-device AMOLED display surfaces what's running, who's connected, and how hot the silicon is.

Clustering

Run multiple cubes side-by-side and Vault OS pools their compute as a single elastic resource. Need more? Try the calculator below ↓

Token Usage

Cloud AI means hitting usage caps mid-job, paying again for every retry, and watching costs scale with the very productivity gains they were supposed to buy you. Alpha Cube turns inference cost from a recurring tax into a one-time purchase of hardware you own.

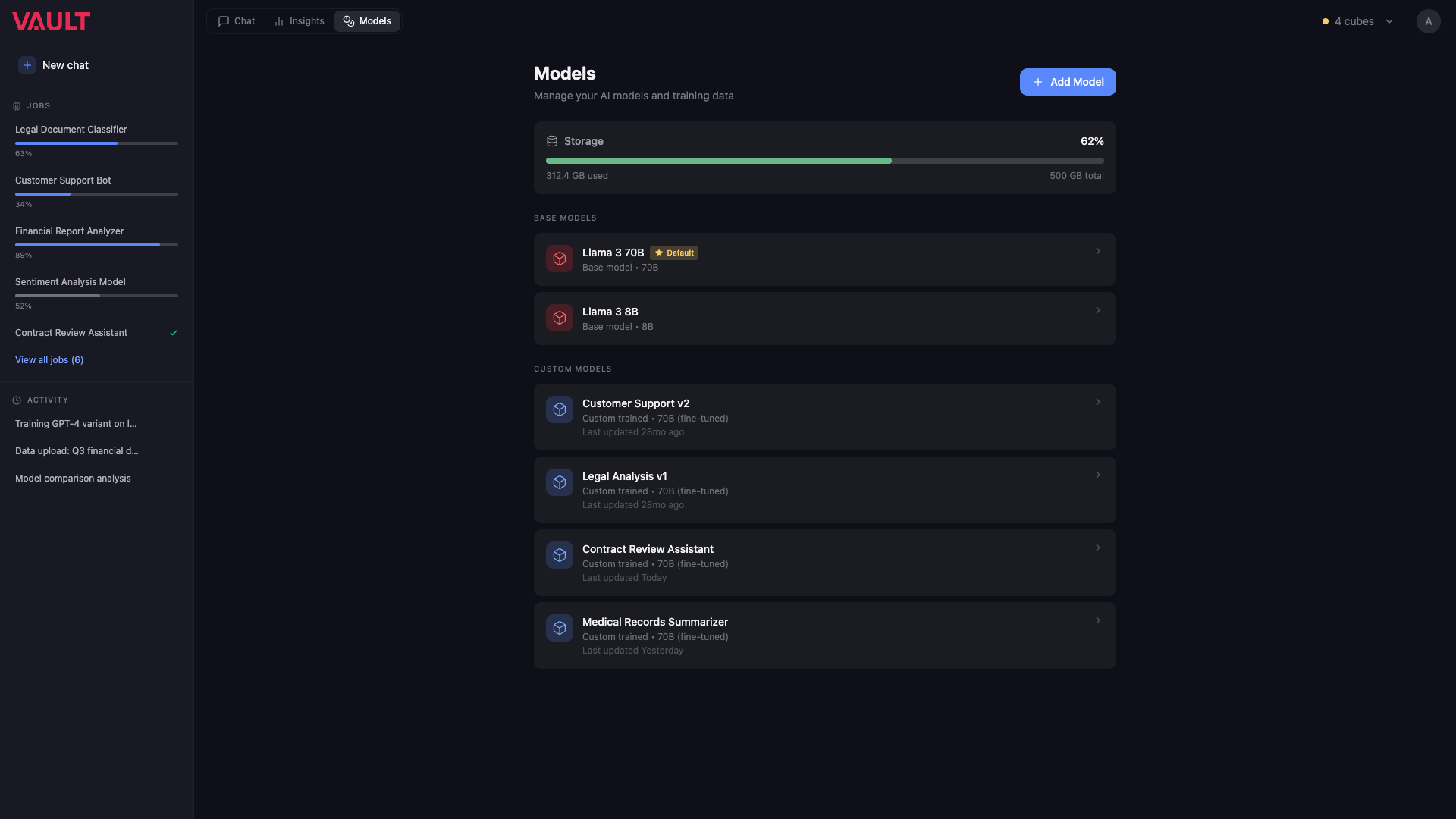

Choose your models with Vault OS

Run frontier open-weight models — Llama, Mistral, Qwen — or upload your own fine-tunes. Vault OS handles deployment, agent orchestration, and background training jobs.

Calculator

How much are you leaking? Estimate your annual cloud-AI cost and the Vault configuration that replaces it.

Override assumptions

Adjust this if your team spends more or less than the default of $3,600 per heavy AI user per year.

Heavy daily AI use (coding agents, document research, model evaluation) typically lands around $300/mo per user across Cursor, Claude, Copilot, and API overage, which works out to $3,600 per user per year.
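For readers who want to check the arithmetic by hand, here is a minimal sketch of the estimate. It assumes only the $300/mo default quoted above. The function and parameter names are illustrative and not part of Vault OS or the on-page calculator. Hardware price is left as an input because it is not stated here, and the sketch ignores power, maintenance, and any cloud spend you keep.

```python
# Illustrative sketch only: names are hypothetical, not a Vault API.
# Assumption: $300 per heavy AI user per month, the default quoted above.
DEFAULT_MONTHLY_SPEND_PER_USER = 300.0  # USD


def annual_cloud_ai_cost(heavy_users, monthly_spend_per_user=DEFAULT_MONTHLY_SPEND_PER_USER):
    """Estimated yearly cloud-AI spend for a team of heavy users."""
    if heavy_users < 0 or monthly_spend_per_user < 0:
        raise ValueError("inputs must be non-negative")
    return heavy_users * monthly_spend_per_user * 12


def months_to_break_even(hardware_price, heavy_users,
                         monthly_spend_per_user=DEFAULT_MONTHLY_SPEND_PER_USER):
    """Months of avoided cloud spend needed to equal a one-time hardware price.

    hardware_price is whatever your quote says; no price is assumed here.
    """
    monthly_total = heavy_users * monthly_spend_per_user
    if monthly_total <= 0:
        raise ValueError("monthly cloud spend must be positive")
    return hardware_price / monthly_total


if __name__ == "__main__":
    print(annual_cloud_ai_cost(1))   # 3600.0, the per-user default
    print(annual_cloud_ai_cost(10))  # 36000.0 for a ten-person team
```

Override `monthly_spend_per_user` the same way the "Override assumptions" field does, and pass your quoted price to `months_to_break_even` to see how quickly the hardware pays for itself under these simplified assumptions.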

Your data stays in your building. Replace variable token billing with one-time hardware you own.

Reserve your Alpha Cube

First production run. Limited units. Each cube is assembled, tested, and validated before it ships.

Alpha Cube

- 2× NVIDIA RTX 5090

- 32-core Threadripper Pro · 256 GB RAM

- 8 TB local NVMe storage

- Single PSU · standard power

For teams running frontier 70B-class models with strong concurrency. The default starting point for most organizations.

Alpha Cube Pro

- 4× NVIDIA RTX 5090

- 32-core Threadripper Pro · 256 GB RAM

- 8 TB local NVMe storage

- Dual PSU · dual-outlet or 240V

Doubles the compute headroom for organizations training larger models, running heavier concurrent workloads, or hosting more agents.

Both share an identical aluminum enclosure. Reserve with a non-refundable deposit; remaining balance invoiced before shipment.